Poisson distribution

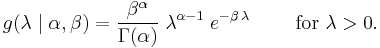

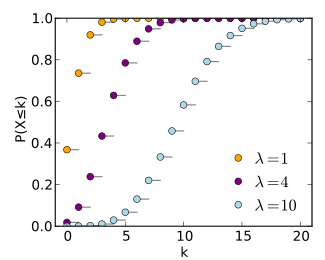

Probability mass function. The horizontal axis is the index k. The function is only defined at integer values of k. The connecting lines are only guides for the eye.

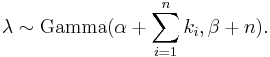

Cumulative distribution function. The horizontal axis is the index k. The CDF is discontinuous at the integer values of k and flat everywhere else, because a Poisson-distributed variable takes on only integer values.

| notation: |  |

|---|---|

| parameters: | ╬╗ > 0 (real) |

| support: | k Ōłł {ŌĆē0, 1, 2, 3, ...ŌĆē} |

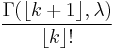

| pmf: |  |

| cdf: |  for for  or or

(where |

| mean: |  |

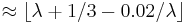

| median: |  |

| mode: |  |

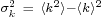

| variance: |  |

| skewness: |  |

| ex.kurtosis: |  |

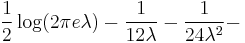

| entropy: | ![\lambda[1\!-\!\log(\lambda)]\!+\!e^{-\lambda}\sum_{k=0}^\infty \frac{\lambda^k\log(k!)}{k!}](/I/b27b6cadba5878e13c577c34813aa485.png)

(for large |

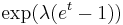

| mgf: |  |

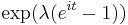

| cf: |  |

In probability theory and statistics, the Poisson distribution (pronounced [pwasɔ̃]; also called the Poisson law of small numbers[1]) is a discrete probability distribution that expresses the probability of a given number of events occurring in a fixed period of time if these events occur with a known average rate and independently of the time since the last event. (The Poisson distribution can also be used for the number of events in other specified intervals such as distance, area or volume.)

The distribution was first introduced by Siméon-Denis Poisson (1781–1840) and published, together with his probability theory, in 1838 in his work Recherches sur la probabilité des jugements en matière criminelle et en matière civile ("Research on the Probability of Judgments in Criminal and Civil Matters"). The work focused on certain random variables N that count, among other things, the number of discrete occurrences (sometimes called "arrivals") that take place during a time interval of given length.

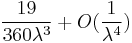

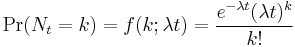

If the expected number of occurrences in this interval is λ, then the probability that there are exactly k occurrences (k being a non-negative integer, k = 0, 1, 2, ...) is equal to

$$f(k; \lambda) = \frac{\lambda^k e^{-\lambda}}{k!}$$

where

- e is the base of the natural logarithm (e = 2.71828...)

- k is the number of occurrences of an event, whose probability is given by the function above

- k! is the factorial of k

- λ is a positive real number, equal to the expected number of occurrences during the given interval. For instance, if the events occur on average 4 times per minute, and one is interested in the probability of k events occurring in a 10-minute interval, one would use as the model a Poisson distribution with λ = 10 × 4 = 40.
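The probability mass function above can be evaluated directly. The following is a minimal Python sketch (standard library only; the name `poisson_pmf` is our own choice, not taken from any particular library). It works in log space, using `math.lgamma(k + 1)` for log k!, so that neither λ^k nor k! is formed explicitly; both overflow a double-precision float for moderately large k.

```python
import math


def poisson_pmf(k: int, lam: float) -> float:
    """Return P(X = k) = lam**k * exp(-lam) / k! for X ~ Pois(lam).

    Computed as exp(k*log(lam) - lam - log(k!)) so that lam**k and k!
    are never formed explicitly (both overflow quickly).
    """
    if lam <= 0:
        raise ValueError("lam must be a positive real number")
    if k < 0:
        return 0.0  # the pmf is zero off the support {0, 1, 2, ...}
    return math.exp(k * math.log(lam) - lam - math.lgamma(k + 1))


# The example from the text: 4 events per minute over a 10-minute interval.
lam = 10 * 4  # = 40
for k in (30, 40, 50):
    print(k, poisson_pmf(k, lam))
```

Of the three printed values, the one at k = 40 is the largest, since the modes of Pois(40) are 39 and 40.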

As a function of k, this is the probability mass function. The Poisson distribution can be derived as a limiting case of the binomial distribution.

The Poisson distribution can be applied to systems with a large number of possible events, each of which is rare. A classic example is the nuclear decay of atoms.

The Poisson distribution is sometimes called a Poissonian, analogous to the term Gaussian for a Gauss or normal distribution.


Poisson noise and characterizing small occurrences

The parameter λ is not only the mean number of occurrences $\langle k \rangle$, but also its variance $\sigma_k^2 = \langle k^2 \rangle - \langle k \rangle^2$ (see Table). Thus, the number of observed occurrences fluctuates about its mean λ with a standard deviation $\sigma_k = \sqrt{\lambda}$. These fluctuations are denoted as Poisson noise or (particularly in electronics) as shot noise.
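The equality of mean and variance can be checked by summing the probability mass function directly. A minimal Python sketch (standard library only); the helper names and the truncation point of the infinite sums are our own assumptions:

```python
import math


def poisson_pmf(k: int, lam: float) -> float:
    """P(X = k) for X ~ Pois(lam), evaluated in log space."""
    return math.exp(k * math.log(lam) - lam - math.lgamma(k + 1))


def mean_and_variance(lam: float) -> tuple[float, float]:
    """Mean and variance of Pois(lam) obtained by summing over the pmf.

    The infinite sums are truncated at lam + 20*sqrt(lam) + 20, an assumed
    cut-off beyond which the remaining probability is negligible.
    """
    kmax = int(lam + 20 * math.sqrt(lam) + 20)
    probs = [poisson_pmf(k, lam) for k in range(kmax + 1)]
    mean = sum(k * p for k, p in enumerate(probs))
    second_moment = sum(k * k * p for k, p in enumerate(probs))
    return mean, second_moment - mean ** 2


for lam in (0.5, 4.0, 40.0, 400.0):
    mean, var = mean_and_variance(lam)
    # sd / mean = 1/sqrt(lam): relative fluctuations shrink as lam grows.
    print(f"lam={lam}: mean={mean:.6f}, variance={var:.6f}, "
          f"sd/mean={math.sqrt(var) / mean:.4f}")
```

The ratio sd/mean = 1/√λ is why Poisson noise is most noticeable when counts are small.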

The correlation of the mean and standard deviation in counting independent, discrete occurrences is useful scientifically. By monitoring how the fluctuations vary with the mean signal, one can estimate the contribution of a single occurrence, even if that contribution is too small to be detected directly. For example, the charge e on an electron can be estimated by correlating the magnitude of an electric current with its shot noise. If N electrons pass a point in a given time t on the average, the mean current is $I = eN/t$; since the current fluctuations should be of the order $\sigma_I = e\sqrt{N}/t$ (i.e. the standard deviation of the Poisson process), the charge e can be estimated from the ratio $t\sigma_I^2/I$. An everyday example is the graininess that appears as photographs are enlarged; the graininess is due to Poisson fluctuations in the number of reduced silver grains, not to the individual grains themselves. By correlating the graininess with the degree of enlargement, one can estimate the contribution of an individual grain (which is otherwise too small to be seen unaided). Many other molecular applications of Poisson noise have been developed, e.g., estimating the number density of receptor molecules in a cell membrane.
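The charge-estimation argument can be tried on simulated data. The Python sketch below is illustrative only: all numbers are made-up values in arbitrary units, the function names are hypothetical, and the symbol `q` stands for the charge e in the text to avoid a clash with Euler's number. It generates Poisson counts per time window by summing exponential inter-arrival gaps, converts them to currents I = qN/t, and recovers q as t·Var(I)/mean(I), which equals q because Var(N) = E[N] for Poisson counts.

```python
import random
import statistics


def count_arrivals(rate: float, t: float, rng: random.Random) -> int:
    """Number of arrivals in [0, t] for a Poisson process with the given rate.

    Inter-arrival gaps of a Poisson process are exponential with mean 1/rate,
    so gaps are added until the running clock passes t.
    """
    n = 0
    clock = rng.expovariate(rate)
    while clock <= t:
        n += 1
        clock += rng.expovariate(rate)
    return n


def estimate_charge(currents: list[float], t: float) -> float:
    """Estimate the charge quantum as t * Var(I) / mean(I)."""
    return t * statistics.pvariance(currents) / statistics.fmean(currents)


# Illustrative values in arbitrary units (not physical constants).
q = 1.6            # charge carried by one particle
t = 0.01           # length of each counting window
mean_count = 50.0  # average number of particles per window
rng = random.Random(2024)
currents = [q * count_arrivals(mean_count / t, t, rng) / t for _ in range(20_000)]
q_hat = estimate_charge(currents, t)
print(f"true q = {q}, estimated q = {q_hat:.3f}")
```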

Related distributions

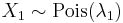

- If $X_1 \sim \mathrm{Pois}(\lambda_1)$ and $X_2 \sim \mathrm{Pois}(\lambda_2)$ are independent, then the difference $Y = X_1 - X_2$ follows a Skellam distribution.

- If $X_1 \sim \mathrm{Pois}(\lambda_1)$ and $X_2 \sim \mathrm{Pois}(\lambda_2)$ are independent, and $Y = X_1 + X_2$, then the distribution of $X_1$ conditional on $Y = y$ is a binomial. Specifically, $X_1 \mid (Y = y) \sim \mathrm{B}\!\left(y, \frac{\lambda_1}{\lambda_1 + \lambda_2}\right)$. More generally, if X1, X2, ..., Xn are independent Poisson random variables with parameters λ1, λ2, ..., λn, then

  $$X_i \;\Big|\; \left(\sum_{j=1}^n X_j = y\right) \sim \mathrm{B}\!\left(y, \frac{\lambda_i}{\sum_{j=1}^n \lambda_j}\right).$$

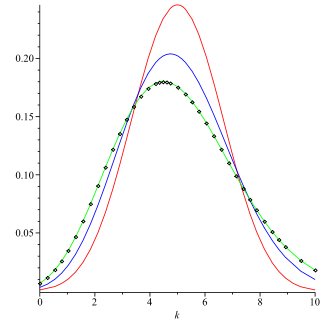

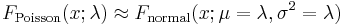

- The Poisson distribution can be derived as a limiting case of the binomial distribution as the number of trials goes to infinity and the expected number of successes remains fixed (see law of rare events below). Therefore it can be used as an approximation of the binomial distribution if n is sufficiently large and p is sufficiently small. There is a rule of thumb stating that the Poisson distribution is a good approximation of the binomial distribution if n is at least 20 and p is smaller than or equal to 0.05, and an excellent approximation if n ≥ 100 and np ≤ 10.[2]

- For sufficiently large values of λ (say λ > 1000), the normal distribution with mean λ and variance λ (standard deviation $\sqrt{\lambda}$) is an excellent approximation to the Poisson distribution. If λ is greater than about 10, then the normal distribution is a good approximation if an appropriate continuity correction is performed, i.e., P(X ≤ x), where (lower-case) x is a non-negative integer, is replaced by P(X ≤ x + 0.5).

- Variance-stabilizing transformation: When a variable is Poisson distributed, its square root is approximately normally distributed with expected value of about $\sqrt{\lambda}$ and variance of about 1/4.[3] Under this transformation, the convergence to normality is far faster than for the untransformed variable. Other, slightly more complicated, variance-stabilizing transformations are available,[4] one of which is the Anscombe transform. See Data transformation (statistics) for more general uses of transformations.

- If the number of arrivals in a given time interval $[0, t]$ follows the Poisson distribution, with mean $\lambda t$, then the lengths of the inter-arrival times follow the exponential distribution, with mean $1/\lambda$.
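Two of the approximations listed above can be checked numerically. The following Python sketch (standard library only; the function names are our own) compares the exact Poisson CDF for λ = 20 with the normal approximation, with and without the continuity correction x → x + 0.5. It also evaluates Var(√X) by direct summation to show that the value is close to 1/4 for a large λ.

```python
import math


def poisson_pmf(k: int, lam: float) -> float:
    """P(X = k) for X ~ Pois(lam), evaluated in log space."""
    return math.exp(k * math.log(lam) - lam - math.lgamma(k + 1))


def poisson_cdf(x: int, lam: float) -> float:
    """Exact P(X <= x) by summing the pmf."""
    return sum(poisson_pmf(k, lam) for k in range(x + 1))


def normal_cdf(z: float) -> float:
    """Standard normal CDF via the error function."""
    return 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))


def normal_approx_cdf(x: int, lam: float, continuity: bool = True) -> float:
    """Approximate P(X <= x) by N(lam, lam), optionally evaluated at x + 0.5."""
    shift = 0.5 if continuity else 0.0
    return normal_cdf((x + shift - lam) / math.sqrt(lam))


def variance_of_sqrt(lam: float) -> float:
    """Var(sqrt(X)) = E[X] - (E[sqrt(X)])**2, summed over a truncated support."""
    kmax = int(lam + 20 * math.sqrt(lam) + 20)
    e_sqrt = sum(math.sqrt(k) * poisson_pmf(k, lam) for k in range(kmax + 1))
    e_x = sum(k * poisson_pmf(k, lam) for k in range(kmax + 1))
    return e_x - e_sqrt ** 2


lam = 20.0
xs = range(10, 31)
err_corrected = max(abs(poisson_cdf(x, lam) - normal_approx_cdf(x, lam)) for x in xs)
err_plain = max(abs(poisson_cdf(x, lam) - normal_approx_cdf(x, lam, continuity=False))
                for x in xs)
print(f"max |error| with continuity correction:    {err_corrected:.4f}")
print(f"max |error| without continuity correction: {err_plain:.4f}")
print(f"Var(sqrt(X)) for lam = 100: {variance_of_sqrt(100.0):.4f}")  # close to 1/4
```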

Occurrence

The Poisson distribution arises in connection with Poisson processes. It applies to various phenomena of discrete properties (that is, those that may happen 0, 1, 2, 3, ... times during a given period of time or in a given area) whenever the probability of the phenomenon happening is constant in time or space. Examples of events that may be modelled by a Poisson distribution include:

- The number of soldiers killed by horse-kicks each year in each corps in the Prussian cavalry. This example was made famous by a book of Ladislaus Josephovich Bortkiewicz (1868–1931).

- The number of phone calls at a call centre per minute.

- Under an assumption of homogeneity, the number of times a web server is accessed per minute.

- The number of mutations in a given stretch of DNA after a certain amount of radiation.

- The proportion of cells that will be infected at a given multiplicity of infection.

How does this distribution arise? The law of rare events

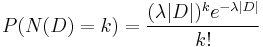

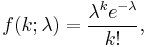

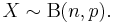

In several of the above examples, such as the number of mutations in a given sequence of DNA, the events being counted are actually the outcomes of discrete trials, and would more precisely be modelled using the binomial distribution, that is

$$X \sim \mathrm{B}(n, p).$$

In such cases n is very large and p is very small (and so the expectation np is of intermediate magnitude). Then the distribution may be approximated by the less cumbersome Poisson distribution

$$X \sim \mathrm{Pois}(np).$$

This is sometimes known as the law of rare events, since each of the n individual Bernoulli events rarely occurs. The name may be misleading because the total count of success events in a Poisson process need not be rare if the parameter np is not small. For example, the number of telephone calls to a busy switchboard in one hour follows a Poisson distribution; the events appear frequent to the operator, but they are rare from the point of view of the average member of the population, who is very unlikely to make a call to that switchboard in that hour.
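The quality of this approximation can be measured with the total variation distance between B(n, p) and Pois(np). The Python sketch below (standard library only; the helper names are our own) keeps the expectation np = 2 fixed while n grows and p shrinks. It prints the distance next to Le Cam's bound n p², which the distance never exceeds.

```python
import math


def binomial_pmf(k: int, n: int, p: float) -> float:
    """P(X = k) for X ~ B(n, p), computed in log space."""
    log_choose = math.lgamma(n + 1) - math.lgamma(k + 1) - math.lgamma(n - k + 1)
    return math.exp(log_choose + k * math.log(p) + (n - k) * math.log1p(-p))


def poisson_pmf(k: int, lam: float) -> float:
    """P(X = k) for X ~ Pois(lam), computed in log space."""
    return math.exp(k * math.log(lam) - lam - math.lgamma(k + 1))


def total_variation(n: int, p: float) -> float:
    """Total variation distance between B(n, p) and Pois(n * p).

    The Poisson mass above n (where the binomial pmf is zero) is included
    as 1 - P(Pois <= n), clipped at zero to absorb rounding error.
    """
    lam = n * p
    diff = sum(abs(binomial_pmf(k, n, p) - poisson_pmf(k, lam)) for k in range(n + 1))
    poisson_tail = max(0.0, 1.0 - sum(poisson_pmf(k, lam) for k in range(n + 1)))
    return 0.5 * (diff + poisson_tail)


# Keep the expectation np = 2 fixed while n grows and p shrinks.
for n in (20, 200, 2000):
    p = 2.0 / n
    print(f"n={n:5d}, p={p:.4f}: TV distance = {total_variation(n, p):.5f}, "
          f"Le Cam bound n*p^2 = {n * p * p:.5f}")
```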

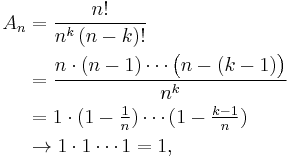

Proof

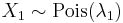

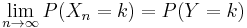

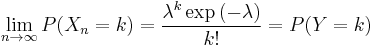

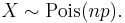

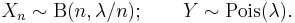

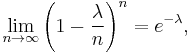

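We will prove that, for fixed λ, if

$$X_n \sim \mathrm{B}\!\left(n, \frac{\lambda}{n}\right),$$

then for each fixed k

$$\lim_{n\to\infty} P(X_n = k) = \frac{\lambda^k e^{-\lambda}}{k!}.$$

To see the connection with the above discussion, for any binomial random variable with large n and small p set $\lambda = np$. Note that the expectation $\mathrm{E}(X_n) = \lambda$ is fixed with respect to n.

First, recall from calculus

$$\lim_{n\to\infty}\left(1 - \frac{\lambda}{n}\right)^n = e^{-\lambda},$$

then since $p = \lambda/n$ in this case, we have

$$\begin{aligned}
\lim_{n\to\infty} P(X_n = k) &= \lim_{n\to\infty} \binom{n}{k} p^k (1-p)^{n-k} \\
&= \lim_{n\to\infty} \frac{n!}{(n-k)!\,k!} \left(\frac{\lambda}{n}\right)^k \left(1 - \frac{\lambda}{n}\right)^{n-k} \\
&= \lim_{n\to\infty} \underbrace{\left[\frac{n!}{n^k (n-k)!}\right]}_{A_n} \left(\frac{\lambda^k}{k!}\right) \underbrace{\left(1 - \frac{\lambda}{n}\right)^n}_{\to\, e^{-\lambda}} \underbrace{\left(1 - \frac{\lambda}{n}\right)^{-k}}_{\to\, 1} \\
&= \left[\lim_{n\to\infty} A_n\right] \left(\frac{\lambda^k}{k!}\right) e^{-\lambda}.
\end{aligned}$$

Next, note that

$$\lim_{n\to\infty} A_n = \lim_{n\to\infty} \frac{n(n-1)(n-2)\cdots(n-k+1)}{n^k} = \lim_{n\to\infty} (1)\left(1 - \frac{1}{n}\right)\left(1 - \frac{2}{n}\right)\cdots\left(1 - \frac{k-1}{n}\right) = 1,$$

where we have taken the limit of each of the terms independently, which is permitted since there is a fixed number of terms with respect to n (there are k of them). Consequently, we have shown that

$$\lim_{n\to\infty} P(X_n = k) = \frac{\lambda^k e^{-\lambda}}{k!}.$$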

Generalization

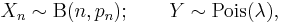

We have shown that if

$$X_n \sim \mathrm{B}(n, p),$$

where $p = \lambda/n$, then $X_n \to \mathrm{Pois}(\lambda)$ in distribution. This holds in the more general situation that $p_n$ is any sequence such that

$$\lim_{n\to\infty} n p_n = \lambda.$$

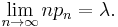

2-dimensional Poisson process

$$P(N(D) = k) = \frac{(\lambda |D|)^k e^{-\lambda |D|}}{k!}$$

where

- e is the base of the natural logarithm (e = 2.71828...)

- k is the number of occurrences of an event, whose probability is given by the formula above

- k! is the factorial of k

- λ is the expected number of points per unit area

- D is the 2-dimensional region

- |D| is the area of the region

- N(D) is the number of points in the process in region D
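A realization of the 2-dimensional process on a rectangle can be simulated in two steps: draw the count N(D) from the Poisson distribution with mean λ|D|, then scatter that many points uniformly over D. The second step relies on the standard property that, for a homogeneous Poisson process, the points are independent and uniformly distributed given their number. The Python sketch below is illustrative only (standard library; the names are hypothetical). It draws the count with Knuth's multiplication method, described under Generating Poisson-distributed random variables, which is adequate only for small means.

```python
import math
import random


def sample_poisson(mean: float, rng: random.Random) -> int:
    """Poisson variate by Knuth's multiplication method (fine for small means)."""
    limit, k, p = math.exp(-mean), 0, 1.0
    while True:
        k += 1
        p *= rng.random()
        if p <= limit:
            return k - 1


def poisson_points_in_rectangle(lam: float, width: float, height: float,
                                rng: random.Random) -> list[tuple[float, float]]:
    """One realization of a homogeneous 2-D Poisson process on [0, width] x [0, height].

    The count N(D) is drawn from Pois(lam * |D|) with |D| = width * height;
    given the count, the points are placed independently and uniformly in D.
    """
    n = sample_poisson(lam * width * height, rng)
    return [(rng.uniform(0.0, width), rng.uniform(0.0, height)) for _ in range(n)]


rng = random.Random(1)
points = poisson_points_in_rectangle(2.0, 3.0, 2.0, rng)  # E[N(D)] = 2.0 * 6.0 = 12
print(len(points))
```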

Properties

- The expected value of a Poisson-distributed random variable is equal to ╬╗ and so is its variance. The higher moments of the Poisson distribution are Touchard polynomials in ╬╗, whose coefficients have a combinatorial meaning. In fact, when the expected value of the Poisson distribution is 1, then Dobinski's formula says that the nth moment equals the number of partitions of a set of size n.

- The mode of a Poisson-distributed random variable with non-integer λ is equal to $\lfloor \lambda \rfloor$, which is the largest integer less than or equal to λ. This is also written as floor(λ). When λ is a positive integer, the modes are λ and λ − 1.

- Sums of Poisson-distributed random variables:

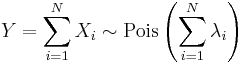

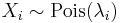

- If $X_i \sim \mathrm{Pois}(\lambda_i)$, $i = 1, \dots, n$, are independent, then

  $$Y = \sum_{i=1}^n X_i \sim \mathrm{Pois}\!\left(\sum_{i=1}^n \lambda_i\right),$$

  that is, the sum also follows a Poisson distribution whose parameter is the sum of the component parameters. A converse is Raikov's theorem, which says that if the sum of two independent random variables is Poisson-distributed, then so is each of those two independent random variables.

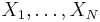

- The sum of normalised square deviations is approximately distributed as chi-square if the mean is of a moderate size ($\lambda > 5$ is suggested).[5] If $x_1, \dots, x_n$ are observations from independent Poisson distributions with means $\lambda_1, \dots, \lambda_n$, then

  $$\sum_{i=1}^n \frac{(x_i - \lambda_i)^2}{\lambda_i} \sim \chi^2(n) \quad \text{(approximately)}.$$

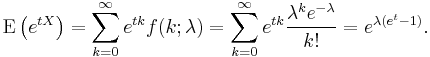

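- The moment-generating function of the Poisson distribution with expected value λ is

  $$\mathrm{E}\!\left(e^{tX}\right) = \sum_{k=0}^\infty e^{tk} \frac{\lambda^k e^{-\lambda}}{k!} = e^{\lambda(e^t - 1)}.$$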

- All of the cumulants of the Poisson distribution are equal to the expected value λ. The nth factorial moment of the Poisson distribution is $\lambda^n$.

- The Poisson distributions are infinitely divisible probability distributions.

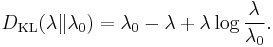

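- The directed Kullback-Leibler divergence between Pois(λ) and Pois(λ0) is given by

  $$D_{\mathrm{KL}}(\lambda \,\|\, \lambda_0) = \lambda_0 - \lambda + \lambda \log\frac{\lambda}{\lambda_0}.$$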
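Several of the properties listed above can be checked numerically. The Python sketch below uses only the standard library; the helper names are our own, and truncating every infinite sum at `KMAX = 200` is an assumption that is harmless for the small λ values used. It verifies Dobinski's formula for λ = 1 against Bell numbers generated with the Bell triangle, checks that convolving Pois(1.5) with Pois(2.5) reproduces Pois(4), and compares a directly summed Kullback-Leibler divergence with the closed form.

```python
import math

KMAX = 200  # assumed truncation point for the infinite sums below


def log_pmf(k: int, lam: float) -> float:
    """log P(X = k) for X ~ Pois(lam)."""
    return k * math.log(lam) - lam - math.lgamma(k + 1)


def pmf(k: int, lam: float) -> float:
    return math.exp(log_pmf(k, lam))


def raw_moment(n: int, lam: float) -> float:
    """E[X**n], summed over k = 0..KMAX."""
    return sum(k ** n * pmf(k, lam) for k in range(KMAX + 1))


def bell_numbers(count: int) -> list[int]:
    """First `count` Bell numbers B_0, B_1, ..., built with the Bell triangle."""
    bells, row = [1], [1]
    while len(bells) < count:
        new_row = [row[-1]]              # each row starts with the previous row's last entry
        for value in row:
            new_row.append(new_row[-1] + value)
        row = new_row
        bells.append(row[0])
    return bells


def convolved_pmf(k: int, lam1: float, lam2: float) -> float:
    """P(X1 + X2 = k) for independent X1 ~ Pois(lam1), X2 ~ Pois(lam2)."""
    return sum(pmf(j, lam1) * pmf(k - j, lam2) for j in range(k + 1))


def kl_by_summation(lam: float, lam0: float) -> float:
    """sum_k P(k; lam) * [log P(k; lam) - log P(k; lam0)]."""
    return sum(pmf(k, lam) * (log_pmf(k, lam) - log_pmf(k, lam0)) for k in range(KMAX + 1))


def kl_closed_form(lam: float, lam0: float) -> float:
    return lam0 - lam + lam * math.log(lam / lam0)


# Dobinski: with lam = 1 the n-th moment is the n-th Bell number.
print([round(raw_moment(n, 1.0)) for n in range(7)], bell_numbers(7))
# Sum rule: Pois(1.5) + Pois(2.5) has the Pois(4.0) pmf.
print(max(abs(convolved_pmf(k, 1.5, 2.5) - pmf(k, 4.0)) for k in range(20)))
# Kullback-Leibler divergence: direct sum versus closed form.
print(kl_by_summation(3.0, 5.0), kl_closed_form(3.0, 5.0))
```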

Generating Poisson-distributed random variables

A simple way to generate random Poisson-distributed numbers is given by Knuth, see References below.

```
algorithm poisson random number (Knuth):
    init:
        Let L ← e^(−λ), k ← 0 and p ← 1.
    do:
        k ← k + 1.
        Generate uniform random number u in [0,1] and let p ← p × u.
    while p > L.
    return k − 1.
```

While simple, the algorithm's running time is linear in λ. Many other algorithms overcome this; some are given by Ahrens & Dieter (see References below).
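For reference, a direct transcription of the pseudocode into Python (standard library only; `knuth_poisson` is our own name). It adds one safeguard that is our assumption rather than part of the algorithm as stated: it refuses values of λ for which e^(−λ) underflows to zero in double precision (roughly λ > 745), where the loop would otherwise return a meaningless count.

```python
import math
import random


def knuth_poisson(lam: float, rng: random.Random) -> int:
    """Draw one Pois(lam) variate with Knuth's multiplication method.

    Multiply uniforms until the running product drops to e^(-lam) or below;
    the number of factors used, minus one, is the variate. The expected
    number of iterations is lam + 1, so this is practical only for small lam.
    """
    if lam <= 0:
        raise ValueError("lam must be positive")
    limit = math.exp(-lam)
    if limit == 0.0:
        raise ValueError("lam too large for this method: exp(-lam) underflows")
    k = 0
    p = 1.0
    while True:
        k += 1
        p *= rng.random()  # u uniform on [0, 1)
        if p <= limit:     # loop condition "while p > L" has failed
            return k - 1


rng = random.Random(42)  # seeded so the draws are reproducible
draws = [knuth_poisson(4.0, rng) for _ in range(10_000)]
print(sum(draws) / len(draws))  # sample mean, close to 4.0
```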

Parameter estimation

Maximum likelihood

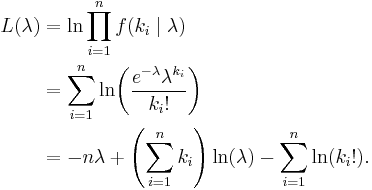

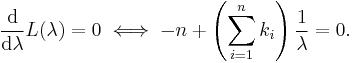

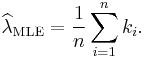

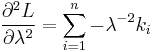

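Given a sample of n measured values $k_i$, we wish to estimate the value of the parameter λ of the Poisson population from which the sample was drawn. To calculate the maximum likelihood value, we form the log-likelihood function

$$L(\lambda) = \log \prod_{i=1}^n f(k_i \mid \lambda) = \sum_{i=1}^n \log\!\left(\frac{e^{-\lambda}\lambda^{k_i}}{k_i!}\right) = -n\lambda + \left(\sum_{i=1}^n k_i\right)\log(\lambda) - \sum_{i=1}^n \log(k_i!).$$

Take the derivative of L with respect to λ and equate it to zero:

$$\frac{\mathrm{d}}{\mathrm{d}\lambda} L(\lambda) = 0 \iff -n + \left(\sum_{i=1}^n k_i\right)\frac{1}{\lambda} = 0.$$

Solving for λ yields a stationary point, which, if the second derivative is negative, is the maximum-likelihood estimate of λ:

$$\widehat{\lambda}_\mathrm{MLE} = \frac{1}{n}\sum_{i=1}^n k_i.$$

Checking the second derivative,

$$\frac{\partial^2 L}{\partial \lambda^2} = -\frac{1}{\lambda^2}\sum_{i=1}^n k_i,$$

we find that it is negative for all λ > 0 whenever at least one $k_i$ is greater than zero; therefore this stationary point is indeed a maximum of the likelihood function.

Since each observation has expectation λ, so does the sample mean. Therefore it is an unbiased estimator of λ. It is also an efficient estimator, i.e. its estimation variance achieves the Cramér-Rao lower bound (CRLB). Hence it is the minimum-variance unbiased estimator (MVUE). It can also be proved that the sample mean is a complete and sufficient statistic for λ.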
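The closed-form estimate can be cross-checked against the log-likelihood itself. A minimal Python sketch (standard library only); the sample `counts` is hypothetical data chosen for illustration, and the function names are our own:

```python
import math


def log_likelihood(lam: float, counts: list[int]) -> float:
    """Poisson log-likelihood L(lam) = -n*lam + (sum k_i) log(lam) - sum log(k_i!)."""
    n = len(counts)
    return (-n * lam + sum(counts) * math.log(lam)
            - sum(math.lgamma(k + 1) for k in counts))


def poisson_mle(counts: list[int]) -> float:
    """Maximum-likelihood estimate of lam: the sample mean."""
    if not counts:
        raise ValueError("need at least one observation")
    return sum(counts) / len(counts)


counts = [2, 4, 3, 0, 5, 3, 4, 3]  # hypothetical sample, for illustration only
lam_hat = poisson_mle(counts)
print(lam_hat)  # 3.0, i.e. 24 / 8
# The log-likelihood is lower on either side of the estimate.
for lam in (lam_hat - 0.5, lam_hat, lam_hat + 0.5):
    print(lam, log_likelihood(lam, counts))
```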

Bayesian inference

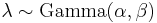

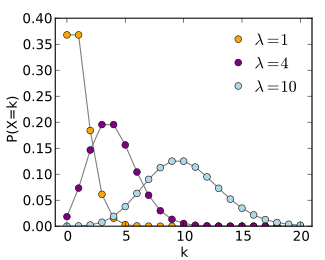

In Bayesian inference, the conjugate prior for the rate parameter ╬╗ of the Poisson distribution is the Gamma distribution. Let

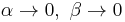

denote that ╬╗ is distributed according to the Gamma density g parameterized in terms of a shape parameter ╬▒ and an inverse scale parameter ╬▓:

Then, given the same sample of n measured values ki as before, and a prior of Gamma(╬▒, ╬▓), the posterior distribution is

The posterior mean E[╬╗] approaches the maximum likelihood estimate  in the limit as

in the limit as  .

.

The posterior predictive distribution of additional data is a Gamma-Poisson (i.e. negative binomial) distribution.
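The conjugate update amounts to adding the total count to the shape and the number of observations to the rate. A minimal Python sketch, assuming the shape/rate (inverse scale) parametrization used above. The prior values and the data are hypothetical. The posterior predictive for one new count is written as a negative binomial in the "number of failures before the r-th success" form, with r = α and success probability β/(β + 1); this is one of several common parametrizations.

```python
import math


def gamma_poisson_update(alpha: float, beta: float,
                         counts: list[int]) -> tuple[float, float]:
    """Posterior Gamma(shape, rate) parameters after observing Poisson counts."""
    return alpha + sum(counts), beta + len(counts)


def posterior_mean(alpha: float, beta: float) -> float:
    """Mean of a Gamma distribution with shape alpha and rate (inverse scale) beta."""
    return alpha / beta


def predictive_pmf(k: int, alpha: float, beta: float) -> float:
    """P(K = k) for one new count, integrating Pois(k | lam) over Gamma(alpha, beta).

    Equals Gamma(alpha + k) / (Gamma(alpha) k!) * (beta/(beta+1))**alpha * (1/(beta+1))**k,
    a negative binomial with r = alpha and success probability beta / (beta + 1).
    """
    log_p = (math.lgamma(alpha + k) - math.lgamma(alpha) - math.lgamma(k + 1)
             + alpha * math.log(beta / (beta + 1.0)) - k * math.log(beta + 1.0))
    return math.exp(log_p)


alpha0, beta0 = 2.0, 1.0            # hypothetical prior
counts = [2, 4, 3, 0, 5, 3, 4, 3]   # hypothetical data
alpha_n, beta_n = gamma_poisson_update(alpha0, beta0, counts)  # (26.0, 9.0)
print(posterior_mean(alpha_n, beta_n))
# Nearly flat prior: the posterior mean is close to the MLE (the sample mean, 3.0).
print(posterior_mean(*gamma_poisson_update(1e-9, 1e-9, counts)))
```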

The "law of small numbers"

The word law is sometimes used as a synonym of probability distribution, and convergence in law means convergence in distribution. Accordingly, the Poisson distribution is sometimes called the law of small numbers because it is the probability distribution of the number of occurrences of an event that happens rarely but has very many opportunities to happen. The Law of Small Numbers is a book by Ladislaus Bortkiewicz about the Poisson distribution, published in 1898. Some historians of mathematics have argued that the Poisson distribution should have been called the Bortkiewicz distribution.[6]

See also

- Compound Poisson distribution

- Tweedie distributions

- Poisson process

- Poisson regression

- Poisson sampling

- Queueing theory

- Erlang distribution

- Skellam distribution

- Incomplete gamma function

- Dobinski's formula

- Robbins lemma

- Coefficient of dispersion

- Conway–Maxwell–Poisson distribution

Notes

- Gullberg, Jan (1997). Mathematics from the Birth of Numbers. New York: W. W. Norton. pp. 963–965. ISBN 039304002X.

- NIST/SEMATECH. "6.3.3.1. Counts Control Charts". e-Handbook of Statistical Methods. Accessed 25 October 2006.

- McCullagh, Peter; Nelder, John (1989). Generalized Linear Models. London: Chapman and Hall. ISBN 0-412-31760-5. Page 196 gives the approximation and the subsequent terms.

- Johnson, N. L.; Kotz, S.; Kemp, A. W. (1993). Univariate Discrete Distributions (2nd ed.). Wiley. p. 163. ISBN 0-471-54897-9.

- Box; Hunter; Hunter. Statistics for Experimenters. Wiley. p. 57.

- Good, I. J. (1986). "Some statistical applications of Poisson's work". Statistical Science 1 (2): 157–180. http://www.jstor.org/stable/2245435.

References

- Donald E. Knuth (1969). Seminumerical Algorithms. The Art of Computer Programming, Volume 2. Addison Wesley.

- Joachim H. Ahrens, Ulrich Dieter (1974). "Computer Methods for Sampling from Gamma, Beta, Poisson and Binomial Distributions". Computing 12 (3): 223–246. doi:10.1007/BF02293108.

- Joachim H. Ahrens, Ulrich Dieter (1982). "Computer Generation of Poisson Deviates". ACM Transactions on Mathematical Software 8 (2): 163–179. doi:10.1145/355993.355997.

- Ronald J. Evans, J. Boersma, N. M. Blachman, A. A. Jagers (1988). "The Entropy of a Poisson Distribution: Problem 87-6". SIAM Review 30 (2): 314–317. doi:10.1137/1030059.

|

||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||||

|

|||||||||||

is the Incomplete gamma function and

is the Incomplete gamma function and  is the

is the

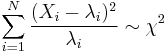
